Lia Agents

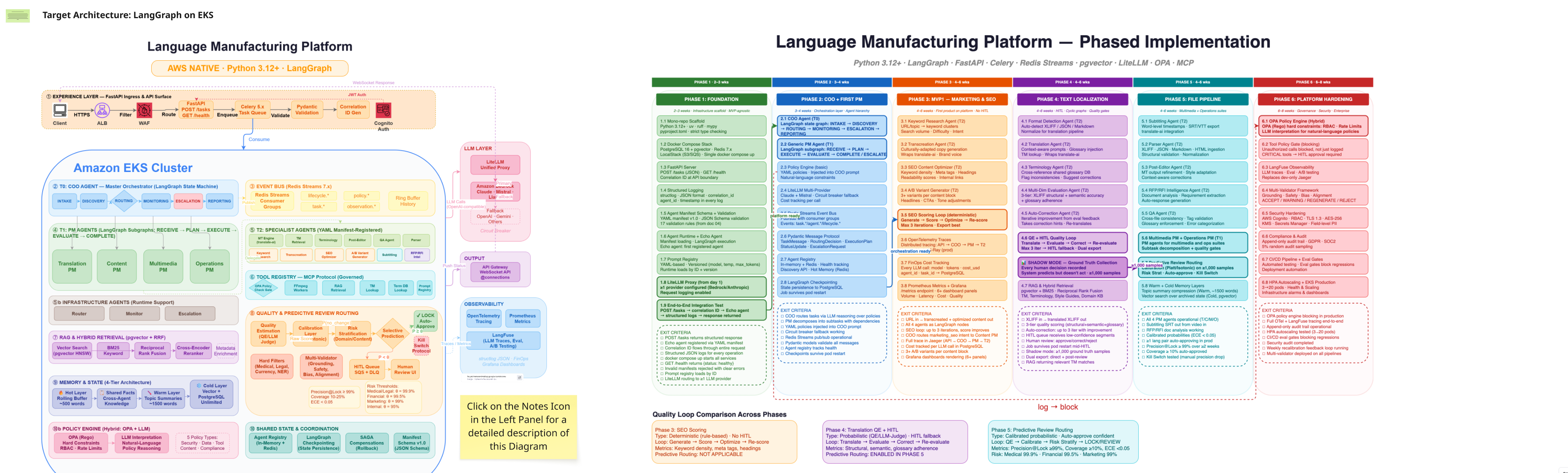

Research, architecture, and stakeholder enablement for a multi-agentic AI platform.

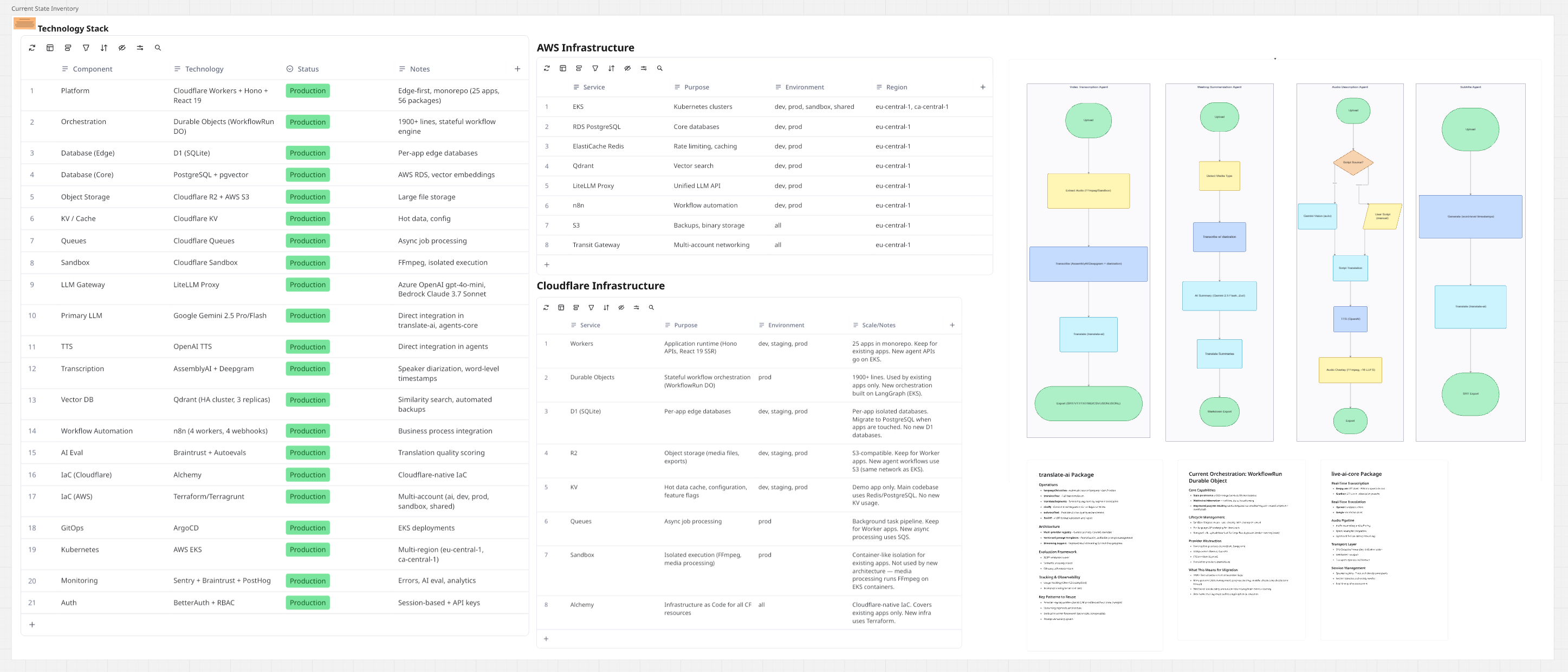

Lia Agents is the technological foundation of Acolad's AI transformation. Pedro went beyond traditional UX scope to become a bridge between product, design, and engineering. He authored architectural documentation, built a 200+ term technical glossary, conducted a three-phase UX research programme, led strategic presentations, and reverse-engineered system prompts to understand how AI agents behave.

The platform's technical complexity was a communication barrier. Engineering spoke in terms of vector stores, policy engines, and orchestration patterns. Product spoke in terms of user outcomes and business metrics. Leadership needed plain-English explanations to make investment decisions. Design had to translate between all three.

Simultaneously, the competitive landscape was evolving weekly. Claude, ChatGPT, Perplexity, Grok, and Gemini were all shipping new agentic features. The team needed a systematic way to benchmark, compare, and learn from the competition's UX decisions.

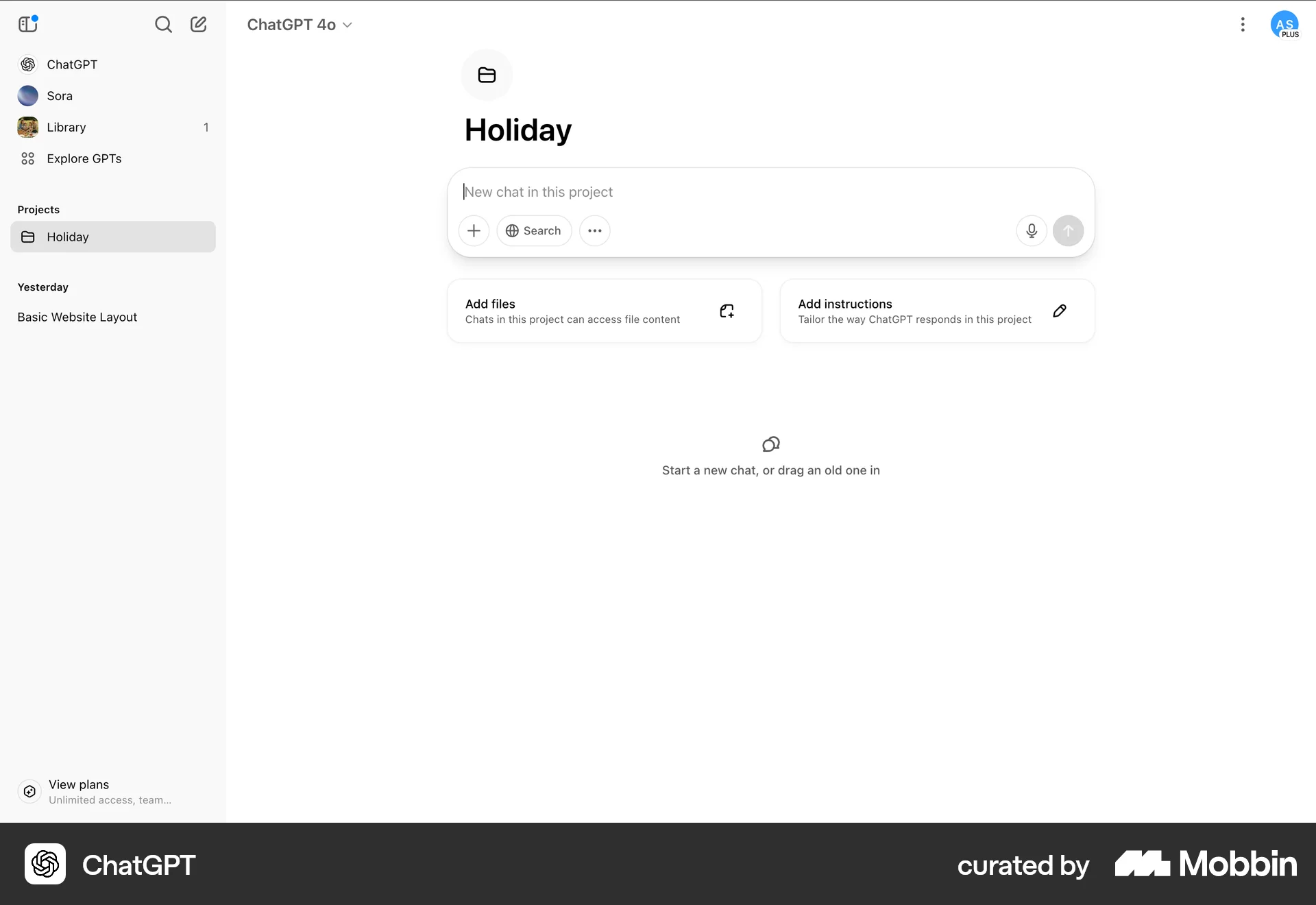

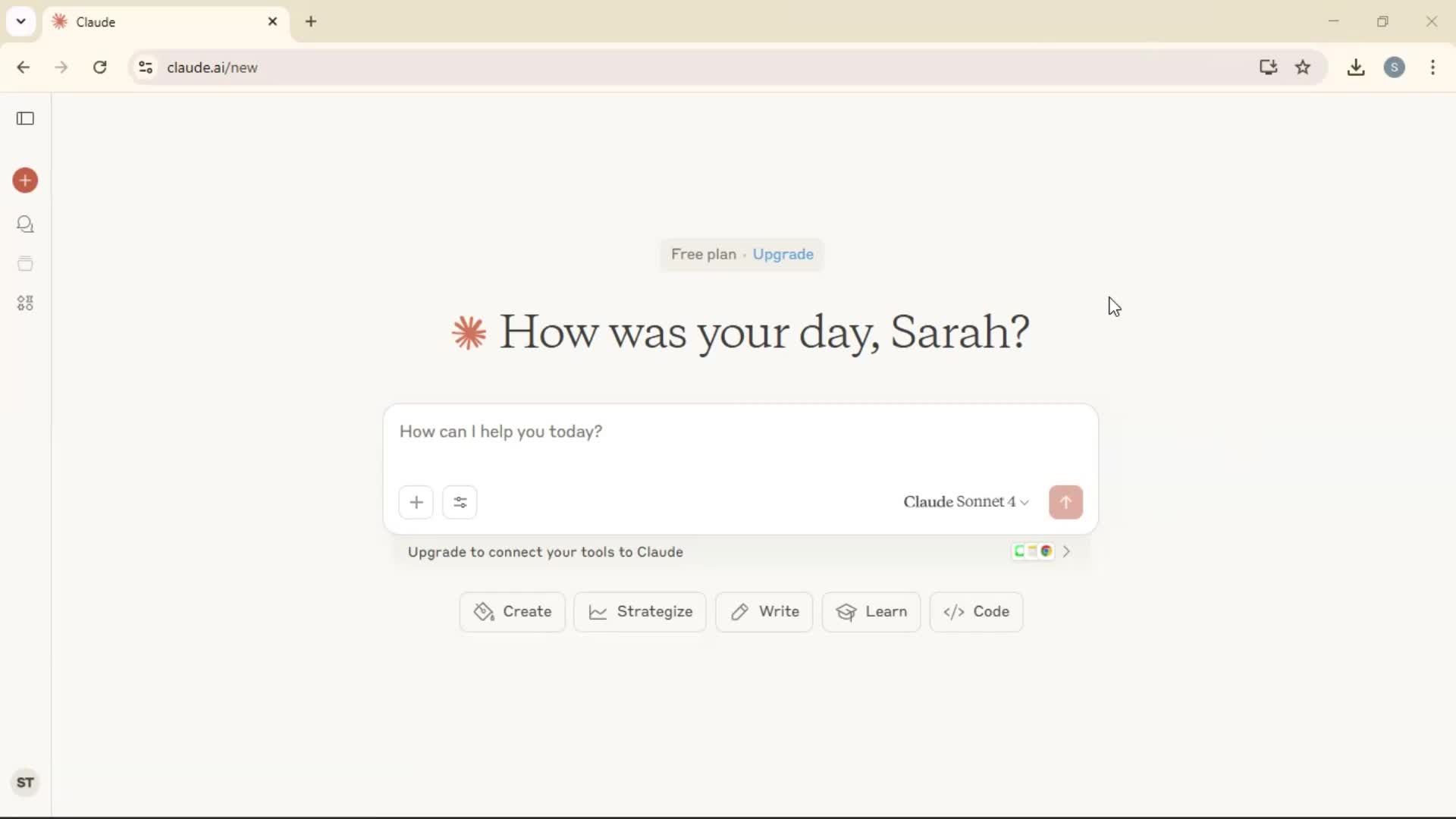

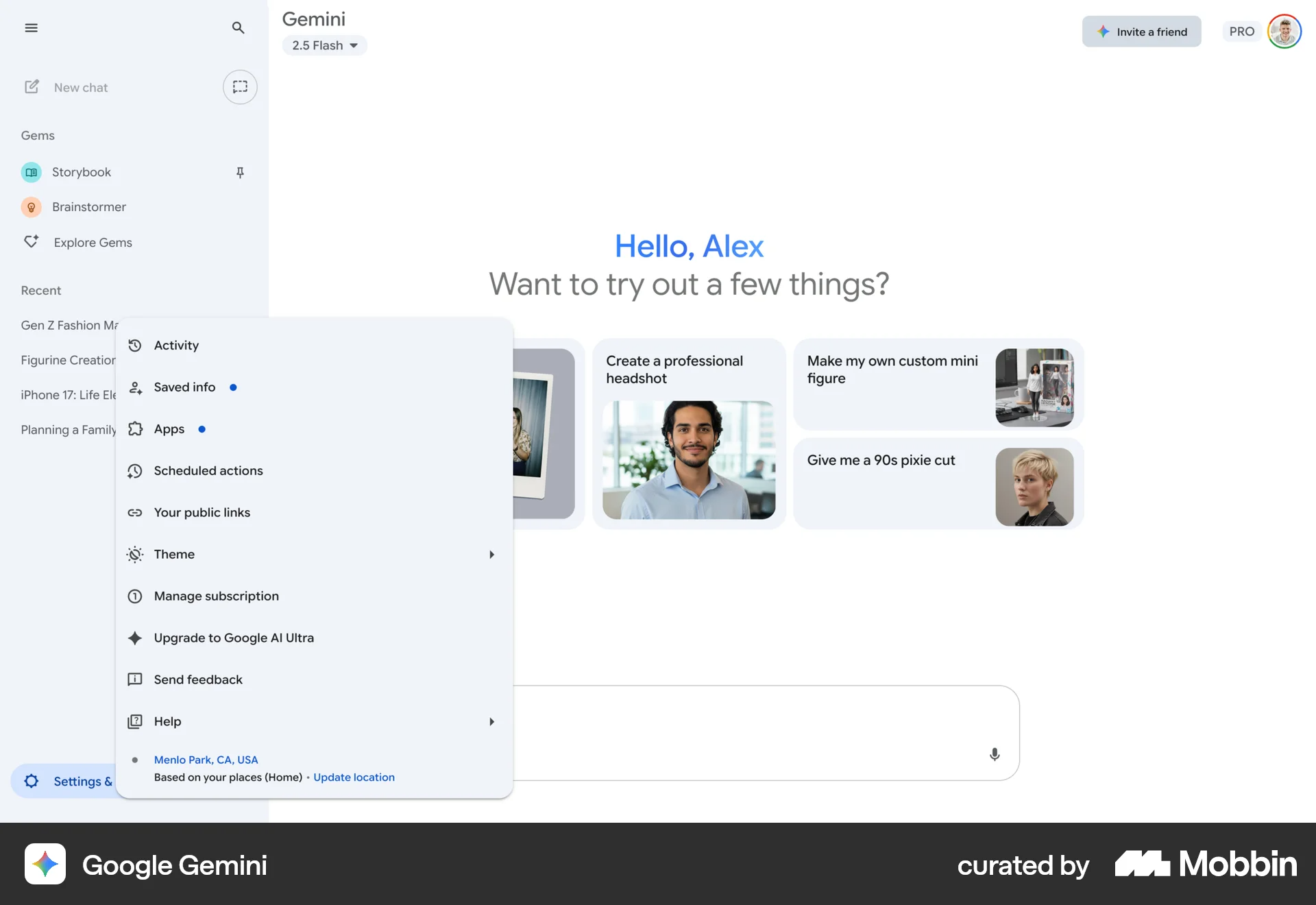

Three-phase programme: (1) a deep assessment of conversational, hybrid, and UI-driven paradigms for agentic AI; (2) a global LLM and agentic landscape analysis; (3) detailed UX benchmarking of five AI platforms: Claude, ChatGPT, Perplexity, Grok, and Gemini.

Systematic UX analysis of each platform: interaction models, settings architectures, onboarding flows, error handling, collaborative features. Documented historical vs current versions.

Authored a 200+ term technical glossary translating concepts (A2A, agent registries, policy engines, quality gates, observability stacks) into plain English. Led team presentations. Facilitated workshops. Wrote ADRs for event bus, vector store, policy engine, task queue, agent orchestration, LLM provider strategy, memory architecture.

Embedded in the engineering codebase. Reviewed Terraform configs and Kubernetes manifests. Reverse-engineered system prompts from agent definitions. Documented prompt design patterns and their UX implications.

The 200-term glossary was the single highest-leverage deliverable of the entire engagement. It unlocked conversations that had been stalling for weeks. When everyone shares the same vocabulary, design decisions happen in minutes instead of meetings. — Pedro Rodrigues

Quantitative outputs from foundational research, architecture documentation, and stakeholder enablement.

This was the deepest level of design-engineering integration in my career. Reviewing Terraform configs and Kubernetes manifests isn't traditional designer territory, but understanding the infrastructure made every design decision better. The glossary proved that stakeholder enablement is a design deliverable, not a side activity.

Next time: start with the glossary on day one. Don't wait for confusion to accumulate before investing in shared vocabulary. And couple the research programme more tightly to sprint commitments so insights translate to shipped features faster.